AI-Powered Voice-to-Voice Reading Comprehension

An Educational Technology Company

Client

An educational technology company specializing in adaptive learning platforms for struggling readers. The organization delivers innovative software solutions designed to help students develop literacy skills through personalized, engaging reading practice integrated with real-time comprehension assessment and conversational AI tutoring.

Challenge

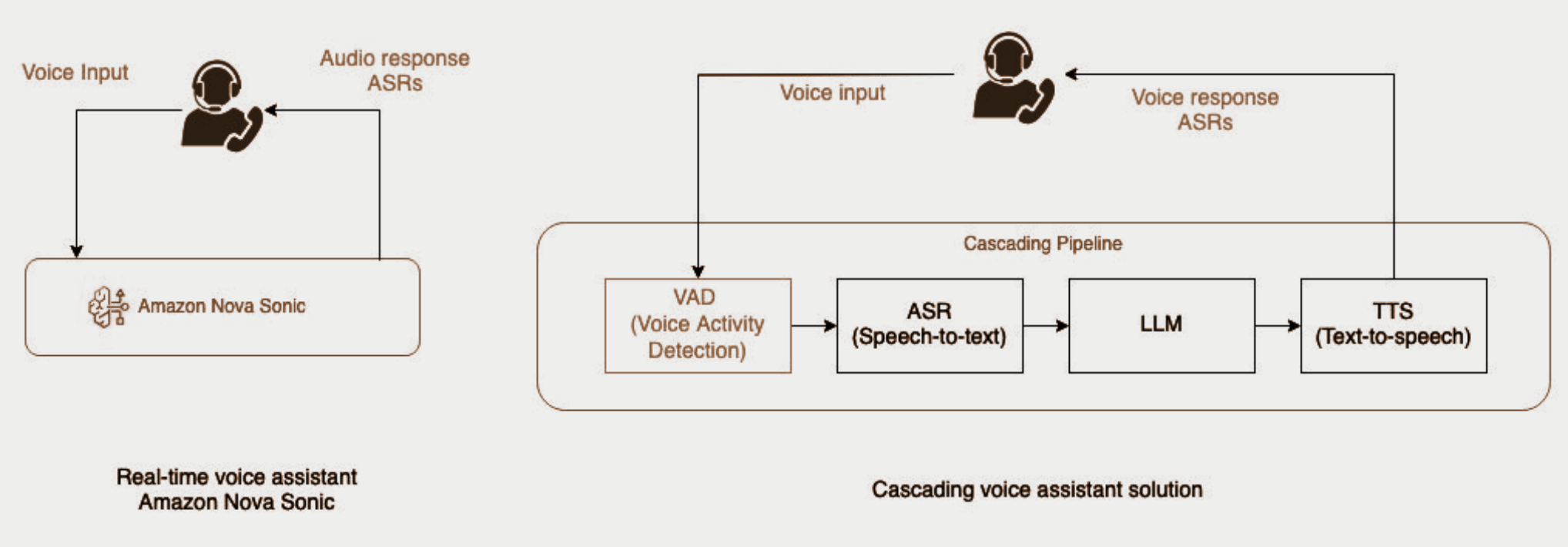

The client required a real-time voice-to-voice conversational system to enhance post-reading comprehension sessions. Students needed to engage in natural dialogue with AI tutors immediately after reading, but traditional architectures cascading through sequential speech-to-text, language processing, and text-to-speech stages introduced multi-second latencies that destroyed conversational naturalness and student engagement.

- Multi-stage cascading processing created unacceptable response delays, making conversations feel robotic and disconnected

- Traditional STT-LLM-TTS pipelines required sequential completion of each stage, preventing simultaneous audio input and output

- Infrastructure complexity and scaling challenges with containerized approaches limited concurrent session support and increased operational overhead

- Without a low-latency, scalable solution, the platform could not deliver the natural, responsive interactions essential for effective literacy instruction

Key Results

- Achieved sub-2-second end-to-end response times, a 65% latency reduction compared with traditional cascaded approaches, enabling natural, fluid conversations indistinguishable from real human tutoring.

- Reduced per-session infrastructure costs to $0.0074 through serverless deployment while scaling to thousands of concurrent sessions with zero operational management overhead.

- Enabled real-time personalization through student context injection, including live progress tracking, course- or book-specific content, and adaptive learning paths maintained throughout the conversation.

- Automated transcript capture and compliance logging, providing educators with session records and analytics for instructional review and student progress assessment.
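The latency and cost figures above can be related with simple arithmetic. The sketch below is illustrative only: it treats 2 seconds as the upper bound on the new response time, so the baseline it derives is also an upper bound, and the session count of 1,000 is an example value rather than a reported workload.

```python
def implied_baseline_seconds(improved_seconds: float, reduction: float) -> float:
    """Baseline latency implied by an improved latency and a fractional reduction.

    improved = baseline * (1 - reduction)  =>  baseline = improved / (1 - reduction)
    """
    return improved_seconds / (1.0 - reduction)


def total_cost_usd(sessions: int, cost_per_session: float = 0.0074) -> float:
    """Infrastructure cost for a number of sessions at the reported per-session rate."""
    return sessions * cost_per_session


# A response time under 2 s after a 65% reduction implies a baseline under about 5.71 s.
print(round(implied_baseline_seconds(2.0, 0.65), 2))

# Example volume (an assumption): 1,000 sessions cost about 7.40 USD.
print(round(total_cost_usd(1000), 2))
```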

Solution

Native Voice-to-Voice Model Architecture: The foundation of the solution is the Amazon Nova Sonic model on Amazon Bedrock, which performs native voice-to-voice processing: speech-to-text, language understanding, reasoning, and text-to-speech are integrated within a single AI model. Unlike traditional cascading architectures that require multiple sequential API calls, Nova Sonic accepts continuous streaming audio input and processes it end to end, with no hand-offs between separate stages. The model performs turn detection and response generation simultaneously, streaming output audio back while continuing to receive input. Because this parallelism happens inside the model, the latency penalties of multi-stage pipelines are avoided and the dialogue feels natural.
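The full-duplex pattern described above, where output streams back while input is still arriving, can be sketched with plain `asyncio` queues. This is a minimal illustration and not the Nova Sonic API: `fake_voice_model` is a hypothetical stand-in that simply emits one reply chunk per input chunk, and the chunk names are made up.

```python
import asyncio


async def fake_voice_model(inbound: asyncio.Queue, outbound: asyncio.Queue) -> None:
    """Hypothetical stand-in for a speech-to-speech model (not a real API)."""
    while True:
        chunk = await inbound.get()
        if chunk is None:              # end-of-input marker
            await outbound.put(None)   # propagate end-of-output
            return
        await outbound.put(f"reply-to-{chunk}")


async def send_audio(chunks, inbound: asyncio.Queue, log: list) -> None:
    """Stream input chunks without waiting for the model to finish."""
    for chunk in chunks:
        log.append(("sent", chunk))
        await inbound.put(chunk)
        await asyncio.sleep(0)         # yield so other tasks can run
    await inbound.put(None)


async def receive_audio(outbound: asyncio.Queue, log: list) -> None:
    """Consume output chunks as soon as they are produced."""
    while True:
        chunk = await outbound.get()
        if chunk is None:
            return
        log.append(("received", chunk))


async def run_session(chunks) -> list:
    inbound, outbound = asyncio.Queue(), asyncio.Queue()
    log: list = []
    # All three coroutines run concurrently, so sending and receiving overlap.
    await asyncio.gather(
        send_audio(chunks, inbound, log),
        fake_voice_model(inbound, outbound),
        receive_audio(outbound, log),
    )
    return log


if __name__ == "__main__":
    for entry in asyncio.run(run_session(["chunk-1", "chunk-2", "chunk-3"])):
        print(entry)
```

In a cascaded pipeline the first reply could not appear until every stage had finished; here the log shows replies arriving while later input chunks are still being sent.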

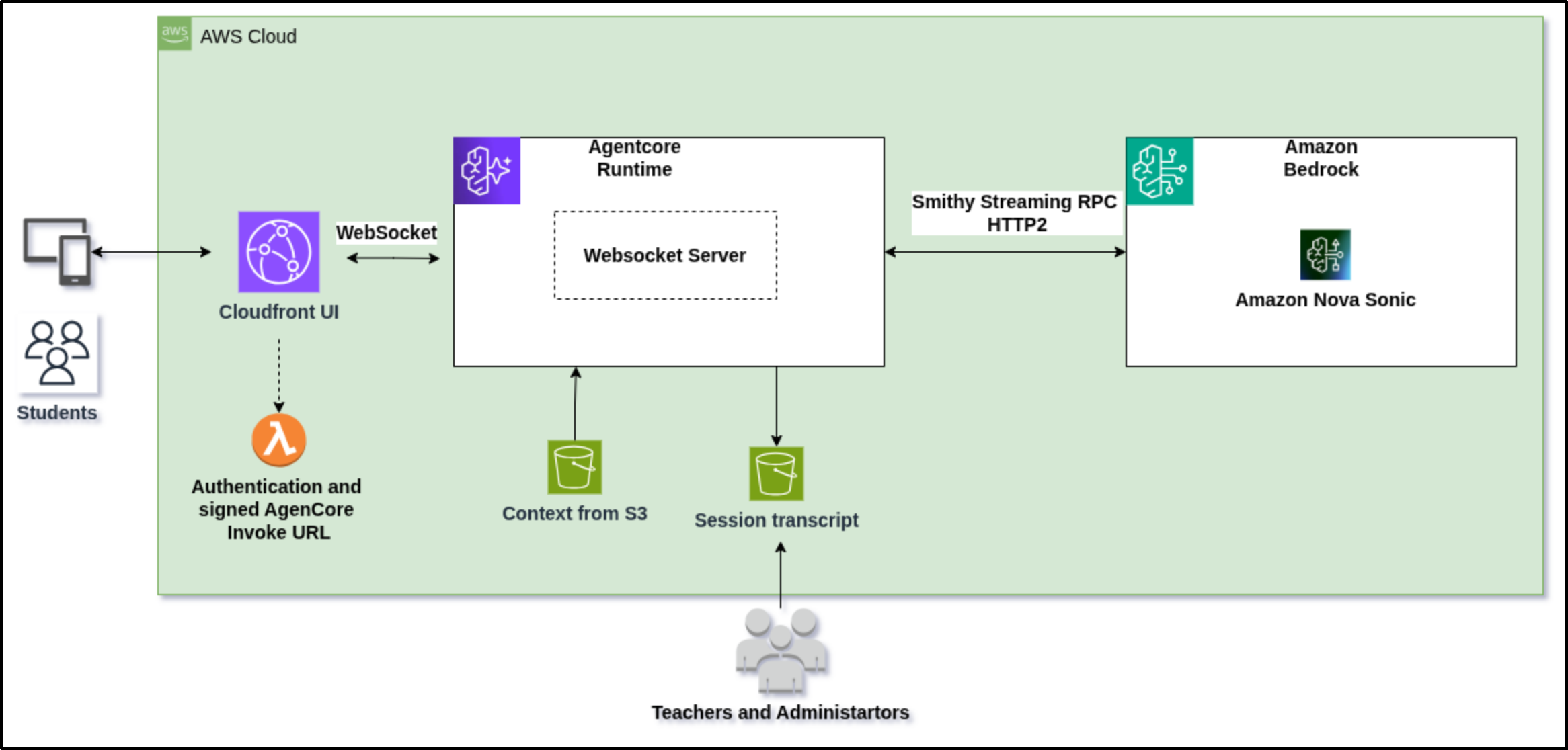

Serverless Infrastructure on Amazon Bedrock AgentCore Runtime: The application deploys as a native Python WebSocket service directly on Amazon Bedrock AgentCore Runtime, a fully managed, serverless compute environment purpose-built for AI agent execution. Each student session runs in an isolated microVM with dedicated CPU, memory, and filesystem resources, eliminating resource contention and providing guaranteed performance isolation. AgentCore automatically scales from zero to 1,000+ concurrent sessions per AWS account without infrastructure provisioning, container orchestration, or operational management. This removes the complexity of traditional containerized deployments while reducing operational costs by orders of magnitude and enabling the platform to scale globally without backend infrastructure changes.

Intelligent Session Control and Real-Time Context Integration: A FastAPI server running within AgentCore Runtime intercepts bidirectional WebSocket events flowing between client and Nova Sonic, enabling operational control and context injection without disrupting audio streaming. The system supports tool-based data fetching for real-time information needs and direct server-side calls for session lifecycle management. Student profiles, reading levels, and course context load at session start, enabling Nova Sonic to adapt conversation difficulty to individual student needs. All conversations are transcribed, stored with metadata, and made available for educator review and learning outcome measurement.
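The interception pattern described above might look roughly like the following sketch. It assumes a hypothetical JSON event schema (`session_start`, `audio`, and `transcript` event types and a `system_prompt` field are invented for illustration and are not the Nova Sonic event format), and it leaves out the WebSocket and FastAPI wiring to focus on the relay logic.

```python
import json
from dataclasses import dataclass, field


@dataclass
class StudentContext:
    student_id: str
    reading_level: str
    book_title: str


@dataclass
class SessionRelay:
    """Sits between client and model; event names here are hypothetical."""
    context: StudentContext
    transcript: list = field(default_factory=list)

    def on_client_event(self, raw: str) -> str:
        """Forward client events, enriching only the session-start event."""
        event = json.loads(raw)
        if event.get("type") != "session_start":
            return raw  # audio and other events pass through untouched
        event["system_prompt"] = (
            f"You are a reading tutor. The student reads at the "
            f"{self.context.reading_level} level and just finished "
            f"'{self.context.book_title}'."
        )
        return json.dumps(event)

    def on_model_event(self, raw: str) -> str:
        """Forward model events unchanged, recording transcript text on the way."""
        event = json.loads(raw)
        if event.get("type") == "transcript":
            self.transcript.append({"role": event["role"], "text": event["text"]})
        return raw


if __name__ == "__main__":
    relay = SessionRelay(StudentContext("s-001", "grade 3", "Charlotte's Web"))
    print(relay.on_client_event(json.dumps({"type": "session_start"})))
    relay.on_model_event(json.dumps(
        {"type": "transcript", "role": "assistant", "text": "Who is Wilbur?"}
    ))
    print(relay.transcript)
```

Returning audio events byte-for-byte unchanged keeps the relay off the critical path for streaming, while the transcript list is what would later be stored with session metadata.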

Secure Multi-Tenant Session Architecture: WebSocket connections to AgentCore Runtime are authenticated with AWS Signature Version 4 (SigV4) presigned URLs, so that only authorized users can initiate sessions. A Lambda function generates the presigned URLs after validating student JWTs issued by the authentication layer. Each session runs in an isolated microVM with dedicated resources, providing complete multi-tenant isolation and data separation without shared infrastructure overhead. This architecture maintains institutional compliance while giving students a seamless experience, with no additional login friction or data exposure risk.
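The validate-then-presign flow can be sketched as below, using only the Python standard library. Several things here are assumptions made for illustration: the token is treated as an HS256 JWT with a shared secret (production systems often use RS256 with published keys), the Lambda event shape is simplified, and `presign` is a hypothetical callable standing in for the actual SigV4 URL generation, which the source does not detail.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Callable, Optional


def _b64url_encode(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign_jwt(claims: dict, secret: bytes) -> str:
    """Create an HS256 token; included only to exercise the verifier."""
    header = _b64url_encode(json.dumps({"alg": "HS256", "typ": "JWT"}).encode())
    payload = _b64url_encode(json.dumps(claims).encode())
    digest = hmac.new(secret, f"{header}.{payload}".encode(), hashlib.sha256).digest()
    return f"{header}.{payload}.{_b64url_encode(digest)}"


def verify_jwt(token: str, secret: bytes, now: Optional[float] = None) -> dict:
    """Return the claims if signature and expiry are valid, else raise PermissionError."""
    try:
        header_b64, payload_b64, signature_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        claims = json.loads(_b64url_decode(payload_b64))
        signature = _b64url_decode(signature_b64)
    except ValueError:
        raise PermissionError("malformed token") from None
    if not isinstance(header, dict) or header.get("alg") != "HS256":
        raise PermissionError("unsupported algorithm")
    expected = hmac.new(
        secret, f"{header_b64}.{payload_b64}".encode(), hashlib.sha256
    ).digest()
    if not hmac.compare_digest(signature, expected):
        raise PermissionError("bad signature")
    exp = claims.get("exp") if isinstance(claims, dict) else None
    current = time.time() if now is None else now
    if not isinstance(exp, (int, float)) or exp <= current:
        raise PermissionError("token expired")
    return claims


def handler(event: dict, secret: bytes, presign: Callable[[str], str]) -> dict:
    """Simplified Lambda-style handler: validate the JWT, then hand out a URL."""
    auth = event.get("headers", {}).get("authorization", "")
    token = auth[len("Bearer "):] if auth.startswith("Bearer ") else ""
    try:
        claims = verify_jwt(token, secret)
    except PermissionError as err:
        return {"statusCode": 401, "body": json.dumps({"error": str(err)})}
    student_id = claims.get("sub")
    if not student_id:
        return {"statusCode": 401, "body": json.dumps({"error": "missing subject"})}
    # `presign` is hypothetical; real code would build a SigV4 presigned URL here.
    return {"statusCode": 200, "body": json.dumps({"url": presign(str(student_id))})}
```

Passing `presign` in as a parameter keeps the token check testable on its own and makes explicit that the URL is only issued after validation succeeds.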

Learning

- Manual annotation improved dataset precision and detection quality.

- YOLO provided fast, accurate detection with minimal post-processing.

- ONNX conversion made deployment lightweight and cross-compatible.

Technologies Used

- AWS SageMaker – Used for training, validation, and deployment of the YOLO and VGG16 models with GPU instance management.

- Amazon S3 – Serves as the centralized storage for datasets, model artifacts, and inference outputs.

- AWS Lambda – Executes the end-to-end inference pipeline, integrating detection and classification models.

- API Gateway – Provides secure endpoints to trigger and manage real-time inference requests.

- YOLO (PyTorch → ONNX) – Detection model (GP) for identifying lesions and generating bounding box coordinates.

- VGG16 (TensorFlow / Keras) – Classification model (SP) for categorizing lesions into severity levels.

- SageMaker Ground Truth (Manual) – Enables manual annotation of dermatological images for high-quality training data.
